1. Approach

Embedded applications are usually programmed in C, or better in C++. C/++ is well suited for close-to-hardware programming: direct hardware access from within algorithms, and code optimized for execution time. For example, inline operations are expanded in place inside a longer algorithm without call overhead, and pointers to specific hardware addresses allow hardware access without including hardware-specific files. The Hardware Abstraction/Adaption Layer (HAL) is used on the C/++ language level.
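A minimal C sketch of the two points above, assuming a hypothetical GPIO output register at an invented address (0x40020014) and invented names `hal_setPin` and `hal_getOutputs`. It shows a `static inline` access function that the compiler can expand in place, and a pointer to a fixed hardware address that needs no vendor header. Without `TARGET` defined, a plain variable stands in for the register, so the same source also compiles on a PC.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical GPIO output register. On the target the address comes from
   the processor's reference manual; 0x40020014u is only a placeholder.
   In a PC build (TARGET not defined) a plain variable stands in for it. */
#ifdef TARGET
#define GPIO_OUT (*(volatile uint32_t *)0x40020014u)
#else
static volatile uint32_t sim_gpio_out;
#define GPIO_OUT sim_gpio_out
#endif

/* static inline: the compiler can expand the access in place inside a
   longer algorithm, without function call overhead. */
static inline void hal_setPin(unsigned pin, int on)
{
    assert(pin < 32u);
    if (on) {
        GPIO_OUT |= (uint32_t)1u << pin;
    } else {
        GPIO_OUT &= ~((uint32_t)1u << pin);
    }
}

/* Reads back the current state of all output pins. */
static inline uint32_t hal_getOutputs(void)
{
    return GPIO_OUT;
}
```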

However, the high-level programming should be done graphically. This is the key to bringing the software closer to the stakeholders who want to use it. C/++ code is not suitable for explaining how the software works.

An important method is dividing software into modules (and components). From the user's side, a module can be seen as a black box. Its functionality should be easy to understand. How the module is implemented is a matter for specialists (for embedded hardware etc.). The user does not want to know how everything is implemented; he wants to know how the parts in his focus are combined and formed, and which of them he can influence.
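A black-box module in C can be sketched as a state struct plus an init and a step function; the user only needs this interface, not its inside. The module below, a first-order low-pass filter called `Pt1`, and its discretization are illustrative assumptions, not taken from the original text.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical module "Pt1": first-order low-pass filter.
   The user sees only this interface: state struct, init, step. */
typedef struct Pt1 {
    float factor;  /* weight of the new input per step, from Ts and T1 */
    float y;       /* internal state: current output */
} Pt1;

/* Ts: sample time, T1: filter time constant, both in seconds. */
void pt1_init(Pt1 *self, float Ts, float T1)
{
    assert(self != NULL && Ts > 0.0f && T1 > 0.0f);
    /* Backward (implicit) Euler: stable for any ratio Ts/T1. */
    self->factor = Ts / (T1 + Ts);
    self->y = 0.0f;
}

/* Called once per sample time with the new input x; returns the output. */
float pt1_step(Pt1 *self, float x)
{
    self->y += self->factor * (x - self->y);
    return self->y;
}
```

Calling `pt1_step` once per sample time is all the graphical model (or the target's cyclic task) has to do; how the filter is computed stays inside the module.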

The other side is test and verification. The software should run on the target, and it should be tested on the target.

A functional test of the whole system is necessary to demonstrate and explain things from the stakeholder's view. This functional test should be done on the graphical level.

But the core functionality of the modules that are black boxes from the user's view should usually be tested directly on and with the target system.

Hence, a two-stage approach is advisable:

- Core functionality, a black box for the end user: implement and test it close to the target.
- Application functionality, visible to the end user: implement and test it graphically.

2. Simulink as graphical development tool

Simulink fulfills two purposes:

- Simulating an environment and testing algorithms in combination with that environment.
- Generating target code from the part of the model that describes the target functionality.

Some graphical programming environments are designed specifically for graphical programming itself. But that is only one aspect of Simulink, perhaps the lesser one. Simulink can simulate complex physical relationships. It is excellent for studying a software idea in a given environment without the real environment having to exist: a "digital twin".

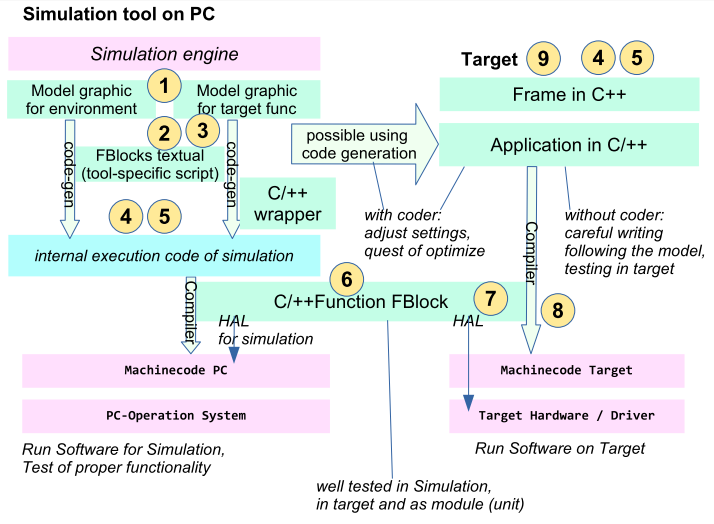

Using S-Functions, Simulink can fulfill the approach named at the beginning: core functionality implemented in the target language. The principle is shown throughout the Matlab documentation, and also in GenSfn_SmlkApproaches.html.

Another discussion is graphical versus textual programming. Textual programming of modules (as black boxes) has some advantages, regardless of whether it is done in the target language. Mathworks prefers its own Matlab script language for textual FBlocks. This is simpler than using S-Functions in C/++, because it is directly integrated into the Simulink programming world, whereas S-Functions in C/++ need a more complex environment to run. Automatic code generation from the graphical model, including the Matlab scripts in FBlocks, is supported and integrated.

This approach is possible, but it does not take into account the idea of developing and testing modules close to the target.

3. Modelica as graphical development tool

Modelica does not yet have a comparably powerful target code generation capability. But the possibilities and approaches for including Function Blocks with original C/++ code are similar.

This means that Modelica (OpenModelica) can be used with the same approach of including core modules in the original target language, as shown in the image in the next chapter. Only the option of code generation is either not used, or used in another way.

The mechanism for including C/++ code in Modelica is also described in ../../../mdlc/html/FB_Clang.en.html.

4. Other tools than Simulink and Modelica

The author uses the same approach, for example, in a Simatic S7 environment. There, the textual 'near target' language is SCL (corresponding to Structured Text, standardized in IEC 61131-3). The graphical programming is done either in FBD (Function Block Diagram of IEC 61131-3) or possibly in CFC (Continuous Function Chart, see https://en.wikipedia.org/wiki/Continuous_Function_Chart). In the past, the author worked with STRUC-G (Siemens Simadyn-D; no longer found on the internet, although it is still in use in plants) using the same approach. The FBlocks were always programmed in C; the graphical model connected and parameterized these FBlocks.

5. Test approaches

Testing is important, and it has many facets:

1) Test to demonstrate defined functionality to explain.
2) Test of the functionality itself, the correctness of the approaches.
3) Test of components.
4) Test of defined functionality as test cases, which should be fulfilled.
5) Test of random situations to test the robustness.
6) Test of modules, from the view of functionality.
7) Test of modules, in the target implementation.
8) Test of special problems of the target implementation.
9) Test of the whole application in the target.

All of these test approaches are important, each possibly with its own aspects.

5.1. Test to demonstrate defined functionality to explain

This can be done on the simulation level. Some modules (seen as black boxes) may be implemented close to the target (C/++ functions, in Simulink as S-Functions) or with equivalent functionality in the simulation tool's own language, either graphically or as script. The latter has some advantages that may be necessary, for example looking inside the functionality, explaining it, and studying it to find a proper approach for the target implementation.

This test can also be done with details abstracted away, for example in an early phase of development, to find out the requirements of the application.

5.2. Test of the functionality itself, the correctness of the approaches

This test level uses only the simulation tool. Both the dedicated test cases and the random tests can be executed with suitable stimuli and environment simulation. Some black-box modules do not need to be the original ones; only their functionality has to be correct. This means the C/++ functions can initially be replaced.

This test is important because it looks both at the requirements of the application and at the internal functionality. For the latter, values and signals inside the application can be evaluated and checked against the expected behavior. For the former, the real requirements can often be determined only from experience with the results.

5.3. Test of components

Testing a component (containing several modules) helps to define its interface and to describe its functionality. A complex application should consist of several components that are as independent as possible. This is important for understanding an application better.

Components can also be reused in other applications. Especially for this reason, their interface and functionality should be well designed.

Components should be defined on the graphical modeling level.

5.4. Test of defined functionality as test cases, which should be fulfilled

This can be done in an early phase as well as in the final phase, to test how the modules work together in the application with various stimuli.

For these tests it does not matter whether the modules that can be seen as black boxes are implemented as a suitable replacement in the simulation tool or close to the real target. It is a functional test, not a test with the target. If a black box fulfills its interface, it does not matter how it is implemented.

But the same test cases can be used both in 2) (functionality) and in 9) (in the target).
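A sketch of how test cases can be kept as plain data, so that one table serves both the simulation and the target test bed. The module `limit` and the names `TestCase` and `runTestCases` are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical module under test: clamp a value into [lo, hi]. */
static int32_t limit(int32_t x, int32_t lo, int32_t hi)
{
    assert(lo <= hi);
    return x < lo ? lo : (x > hi ? hi : x);
}

typedef struct TestCase {
    int32_t x, lo, hi;  /* stimulus */
    int32_t expected;   /* required result */
} TestCase;

/* Test cases as plain data: the same table can be compiled into the
   PC simulation and into the target test bed. */
static const TestCase testCases[] = {
    {  5, 0, 10,  5 },  /* inside the range: unchanged */
    { -3, 0, 10,  0 },  /* below: clamped to lo */
    { 12, 0, 10, 10 },  /* above: clamped to hi */
    { 10, 0, 10, 10 },  /* on the boundary */
};

/* Runs all cases; returns the number of failed cases (0 = all passed). */
static size_t runTestCases(const TestCase *tc, size_t n)
{
    size_t failed = 0;
    for (size_t i = 0; i < n; ++i) {
        if (limit(tc[i].x, tc[i].lo, tc[i].hi) != tc[i].expected) {
            ++failed;
        }
    }
    return failed;
}
```

On the PC, the returned failure count can be checked by the test framework; on the target it could be written to a debug variable or interface, which is left open here.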

5.5. Test of random situations to test the robustness

This approach can and should also be applied both in 2) (functionality) and in 9) (in the target).

Dedicated test cases may not cover all situations that can occur in practice; the test cases may be incomplete. They should be completed when problems are detected. But originally, such problems may simply be overlooked. That is why random tests are important.
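A minimal sketch of a random robustness test, assuming a hypothetical rate limiter module as the unit under test. Instead of checking expected values, it checks invariants after every random input. As an assumption of this sketch, a small xorshift32 generator with a fixed seed is used rather than `rand()`, so the same input sequence is reproducible on PC and target, and a failing step index can be replayed.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical module under test: the output changes by at most
   maxStep per call. */
typedef struct RateLimiter { int32_t y; int32_t maxStep; } RateLimiter;

static int32_t rateLimiter_step(RateLimiter *self, int32_t x)
{
    assert(self->maxStep >= 0);
    /* 64-bit difference: x - y can exceed the int32 range for extreme inputs */
    int64_t diff = (int64_t)x - self->y;
    if (diff > self->maxStep) {
        diff = self->maxStep;
    } else if (diff < -self->maxStep) {
        diff = -self->maxStep;
    }
    self->y = (int32_t)(self->y + diff);
    return self->y;
}

/* xorshift32: tiny, portable pseudo-random generator (state must not be 0). */
static uint32_t xorshift32(uint32_t *state)
{
    uint32_t s = *state;
    s ^= s << 13;
    s ^= s >> 17;
    s ^= s << 5;
    *state = s;
    return s;
}

/* Feeds `steps` random inputs over the full int32 range and checks the
   invariants after each step. Returns the index of the first violating
   step, or -1 if none was found. */
static long randomTest(uint32_t seed, long steps)
{
    RateLimiter rl = { 0, 100 };
    uint32_t rng = seed ? seed : 1u;
    for (long i = 0; i < steps; ++i) {
        int32_t x = (int32_t)((int64_t)xorshift32(&rng) - 2147483648LL);
        int32_t before = rl.y;
        int32_t after = rateLimiter_step(&rl, x);
        int64_t change = (int64_t)after - before;
        /* Invariant 1: never move more than maxStep per call. */
        if (change > rl.maxStep || change < -rl.maxStep) {
            return i;
        }
        /* Invariant 2: never overshoot the input. */
        if ((x >= before && after > x) || (x <= before && after < x)) {
            return i;
        }
    }
    return -1;
}
```

The 64-bit difference in `rateLimiter_step` is exactly the kind of detail such a test targets: with a 32-bit difference, extreme random inputs would overflow.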

5.6. Test of modules, from the view of functionality

Modules can be seen as black boxes. Hence their functionality should be tested independently of their use in components or in an application.

A module can be implemented, for example, using only simulation tool elements, either graphically or as script, to study the functionality independently of target aspects. But if a module has to fulfill specific implementation aspects, such as using the Hardware Adaption/Abstraction Layer (hardware access) or specific operating system access, dedicated modules can be written in C/++ and used as S-Functions in Simulink or as C/++ functions in Modelica.

5.7. Test of modules, in the target implementation

A module is often implemented with deep dependencies on target properties, so it has to run on the target. Hence it should be tested under target conditions, independently of the application.

For a C/++-based FBlock in the graphical modeling environment, the original C/++ code should be tested in the target environment, but of course independently of the application, in a "test bed" on the target.

It is important that the C code is the same as the code used in the S-Function. The necessary adaption of hardware accesses is tested in the original at this point; the same Hardware Abstraction Layer (not the Adaption/Implementation Layer) is used in 6), in the S-Functions. The same applies to the Operating System Adaption Layer.
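The separation described above can be sketched as follows, with hypothetical names throughout: the module function `currentMonitor_step` calls only the HAL functions `hal_readAdc` and `hal_setAlarm`. The HAL implementation shown here is a PC one, fed by the simulation; a target build would link a different file that implements the same two functions with real register accesses, while the module source stays unchanged.

```c
#include <assert.h>
#include <stdint.h>

/* ---- HAL interface (hypothetical): identical for PC and target ---- */
uint16_t hal_readAdc(unsigned channel);
void hal_setAlarm(int on);

/* ---- Module: uses only the HAL interface, never hardware directly ---- */
typedef struct CurrentMonitor {
    unsigned channel;  /* ADC channel of the current sensor */
    uint16_t limit;    /* raw ADC value that trips the alarm */
    int tripped;       /* latched alarm state */
} CurrentMonitor;

void currentMonitor_step(CurrentMonitor *self)
{
    uint16_t raw = hal_readAdc(self->channel);
    if (raw > self->limit) {
        self->tripped = 1;  /* latch until reset by the application */
    }
    hal_setAlarm(self->tripped);
}

/* ---- HAL implementation for the PC simulation (e.g. S-Function build) ----
   The target would provide another file implementing the same functions. */
static uint16_t simAdc[4];  /* input values written by the simulation */
static int simAlarm;        /* output value read back by the simulation */

uint16_t hal_readAdc(unsigned channel)
{
    assert(channel < 4u);
    return simAdc[channel];
}

void hal_setAlarm(int on)
{
    simAlarm = on;
}
```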

5.8. Test of special problems of the target implementation

These points make up the difference between 4) and 5):

- Real timing conditions.
- Proper interaction with the target runtime system (operating system, interrupts etc.).
- Specific properties of a processor, for example on arithmetic overflow.
- Exception handling and its timing.
- Any hardware effects: access times, drops.

These tests can be evaluated before or while testing the whole application on the target (9).
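As an example of processor-specific arithmetic, a sketch of saturating 16-bit addition: some DSPs saturate in hardware while general-purpose processors wrap around, so writing the saturation explicitly in C gives the same result on PC and target. The function name `addSat16` is illustrative.

```c
#include <assert.h>
#include <stdint.h>

/* Saturating 16-bit addition. A plain int16_t sum cast back to int16_t
   typically wraps around (the conversion is implementation-defined), while
   a DSP in saturation mode would clip. Explicit saturation makes the result
   identical on every processor. */
static int16_t addSat16(int16_t a, int16_t b)
{
    int32_t sum = (int32_t)a + (int32_t)b;  /* cannot overflow in 32 bits */
    if (sum > INT16_MAX) {
        return INT16_MAX;
    }
    if (sum < INT16_MIN) {
        return INT16_MIN;
    }
    return (int16_t)sum;
}
```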

5.9. Test of the whole application in the target

Any test that is not done on the real target in the real environment is insufficient. Only a test under original conditions can confirm that everything is OK.

It is not sufficient to argue "The functional test succeeded and the tools to generate the target are all qualified, hence the target works". That is self-deception. A user should only accept a solution that has been tested under original conditions.

The test in the target can use environment simulations done with the simulation tool. If stricter real time is needed, Simulink for example offers some possibilities: running on a fast hardware platform, or even implementing in a powerful FPGA environment for fast real time, etc. Other tools may offer similar options.

From the view of testing with simulation, this approach is called "Processor in the loop". Of course, the test under real conditions uses the same target.
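The step-wise signal exchange between environment model and module can be sketched in plain C. In a real processor-in-the-loop setup, the environment model runs in the simulation tool and the module on the target, coupled by a communication link; here both are hypothetical (a first-order plant and a PI controller with invented parameters) and run in one program, only to show the loop structure.

```c
#include <assert.h>

/* Hypothetical environment model: first-order plant, dy/dt = (u - y) / T. */
typedef struct Plant { double y; double T; } Plant;

static double plant_step(Plant *p, double u, double Ts)
{
    p->y += Ts / p->T * (u - p->y);  /* forward Euler, requires Ts << T */
    return p->y;
}

/* Hypothetical module under test: PI controller. */
typedef struct PiCtrl { double kp, ki, integ; } PiCtrl;

static double pi_step(PiCtrl *c, double setpoint, double measured, double Ts)
{
    double e = setpoint - measured;
    c->integ += c->ki * e * Ts;
    return c->kp * e + c->integ;
}

/* Closed loop: per step, the controller sees only the measured value,
   as it would see the sensor signal on the target, and the plant sees
   only the actuator value. */
static double runClosedLoop(double setpoint, int steps)
{
    const double Ts = 0.01;           /* sample time in seconds */
    Plant plant = { 0.0, 0.5 };
    PiCtrl ctrl = { 2.0, 4.0, 0.0 };
    double y = plant.y;
    for (int i = 0; i < steps; ++i) {
        double u = pi_step(&ctrl, setpoint, y, Ts);
        y = plant_step(&plant, u, Ts);
    }
    return y;
}
```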

The test in the target can be divided into two views:

- a) Test of the C/++ sources (after code generation, the generated sources).
- b) Test of the machine code.

Only b) should be accepted as the final test. But a) may be of interest if effects of the C/++ programming are to be evaluated. For this reason, the C/++ sources to be tested can also be built in a PC environment with better access to internals, using a PC-based IDE (Integrated Development Environment). Strictly speaking, machine code as in b) is tested there too, but this environment may be better for debugging. Exact real time may not be necessary.

With the same approach, b) can be done on several targets: possibly the end-product processor, or a more powerful processor that offers more test access but the same real-time aspects and the same hardware capabilities.

6. What the test approaches mean for modules as S-Functions in Simulink

The FBlocks implemented in the target language (C/++) can also be represented by a Simulink implementation. From the view of the using application, they are black boxes. An implementation in Simulink, especially in an early phase, can help to clarify the functionality.

If this functionality is tested as a module test on the target, only the C/++ sources are tested, not their representation as S-Functions in Simulink.

If these C sources are also used for simulation on the PC in Simulink, they should be exactly the same sources as on the target. This is the approach of emC, "embedded multiplatform C/C++". Adaption to target-specific properties that are not present on the PC is done by the HAL (Hardware Abstraction/Adaption Layer). Adaption to the operating system (threads etc.) is done by the OSAL (Operating System Adaption Layer).